What Does It Mean to Be ‘Safe’?

So what does it mean to be ‘safe’? Is a company ‘safe’ because it has not had an OSHA recordable injury over, say, a one-year period? Does that mean it will continue to be ‘safe’ in the next year? Or does it mean the company is at a higher risk of an incident in the near term, because it wasn’t safe…but lucky? These are the debates I read about online and, as an outsider, I can see both sides. But there has to be a practical middle ground, because Safety and Reliability are interdependent: each depends on the other for its success.

As a career practitioner of holistic Reliability, I know I’m dipping my toe into the domain of various Safety communities. That is always a risk, but it’s one I’m willing to take at this point in time. I’m an outsider who doesn’t share the same paradigms, just looking to learn.

I’ve read with great interest the work of those in Safety who advocate the traditional behaviorist views of Safety, as well as those in the progressive Safety movement (e.g., Safety Differently [Dekker], Safety II [Hollnagel]). Full disclosure: I’m not a Safety professional, an academic or a researcher, just a career practitioner in the field of Reliability Engineering (RE).

There is a fair degree of polarization on this topic, not only between the Reliability/Maintenance and Safety communities, but also among the factions within the Safety community itself. It is very much a ‘we’ vs ‘them’ environment. We should all be working together (not in silos), because our collective effectiveness affects all of us.

ARE WE SAFE OR LUCKY?

I will admit that over the years, my online discussions with my Safety colleagues have swayed me to the middle on this topic. I was initially of the mind that if you give people the proper training, systems and support to do the best job they can, then their behaviors will be consistent with creating a safe working environment. Seems logical, right?

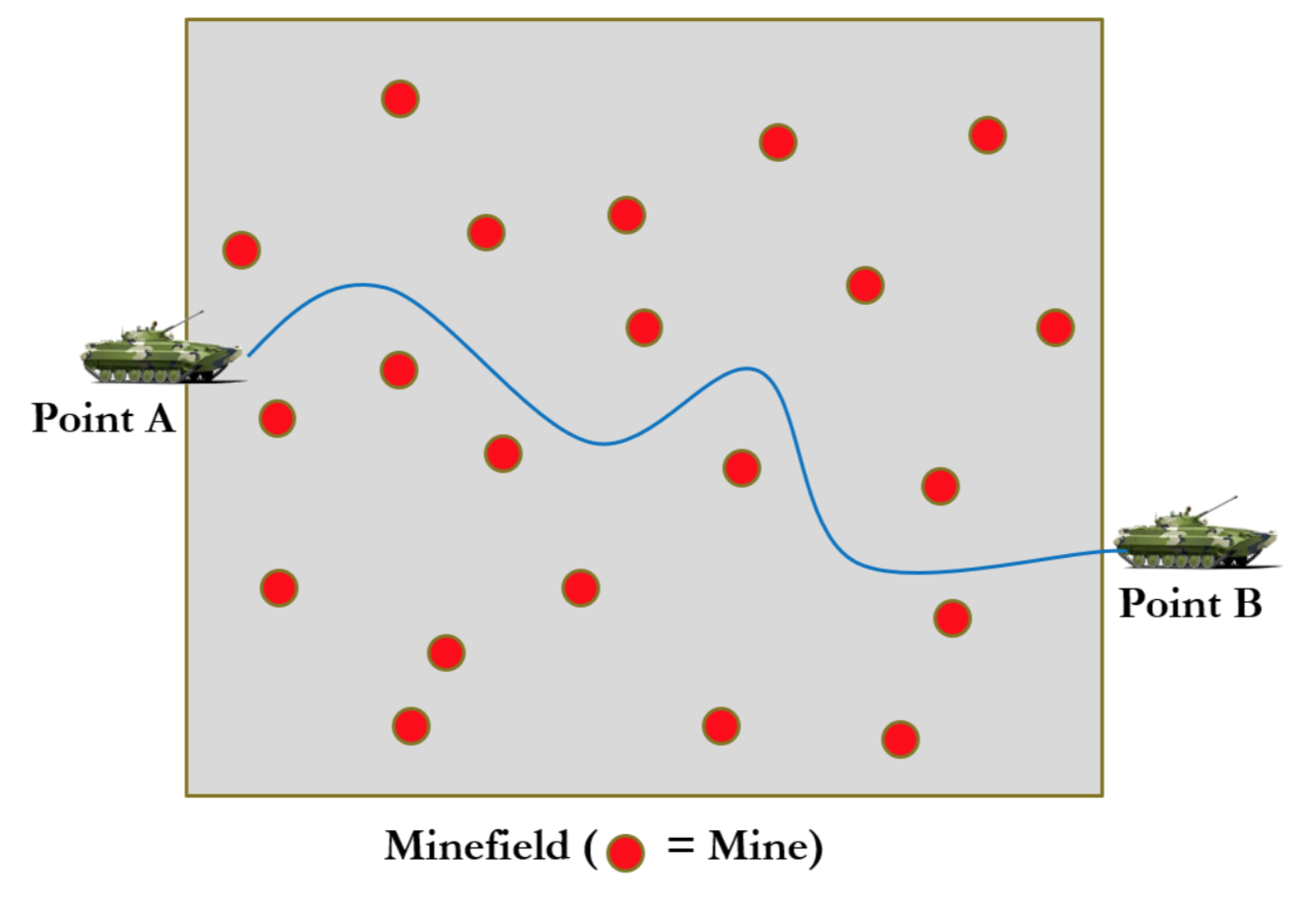

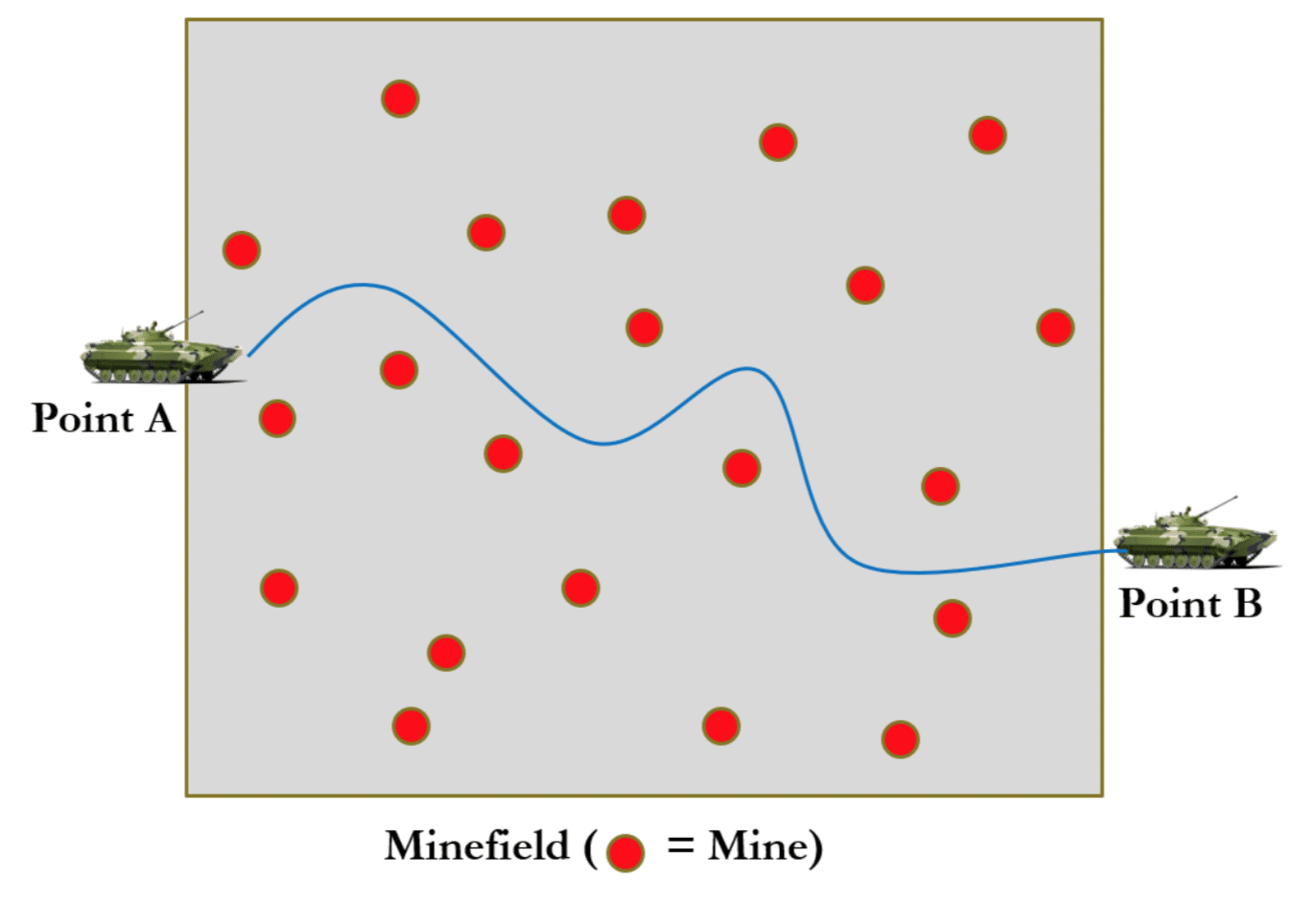

Let’s look at a couple of examples that made me rethink this perspective. Think of the concept of a minefield, where soldiers need to get from Point A to Point B. These days, they have many technologies and techniques to help with mine detection, so they slowly work their way around (or detonate) the areas where they think mines are located.

In the cases where they successfully made it to Point B…does this mean they are ‘safe’? I attempted to draw up this scenario in Figure 1.

Figure 1. Minefield Example

They may be ‘safe’ in the present tense because they made it across, but that does not make the environment they left behind safe. Future soldiers may come through that same minefield, and the fact that others crossed it does not make the area ‘safe’.

Say the minefield is where we work. Let’s take a leap and say that a mine is the equivalent of a workplace hazard/risk. The ones we work around are the ones we know about and have mitigated by various means. The ones we don’t know about put our safety at risk. To me, this is true anywhere.

In this scenario, the mines/hazards are static: they remain in place and do not vary. So their locations were likely mapped by the previous soldiers who successfully passed through, reducing the risk that the next soldiers will trip them (provided they follow the same ‘footsteps’ or path). This is an example of an environment that was not safe and was worked around.

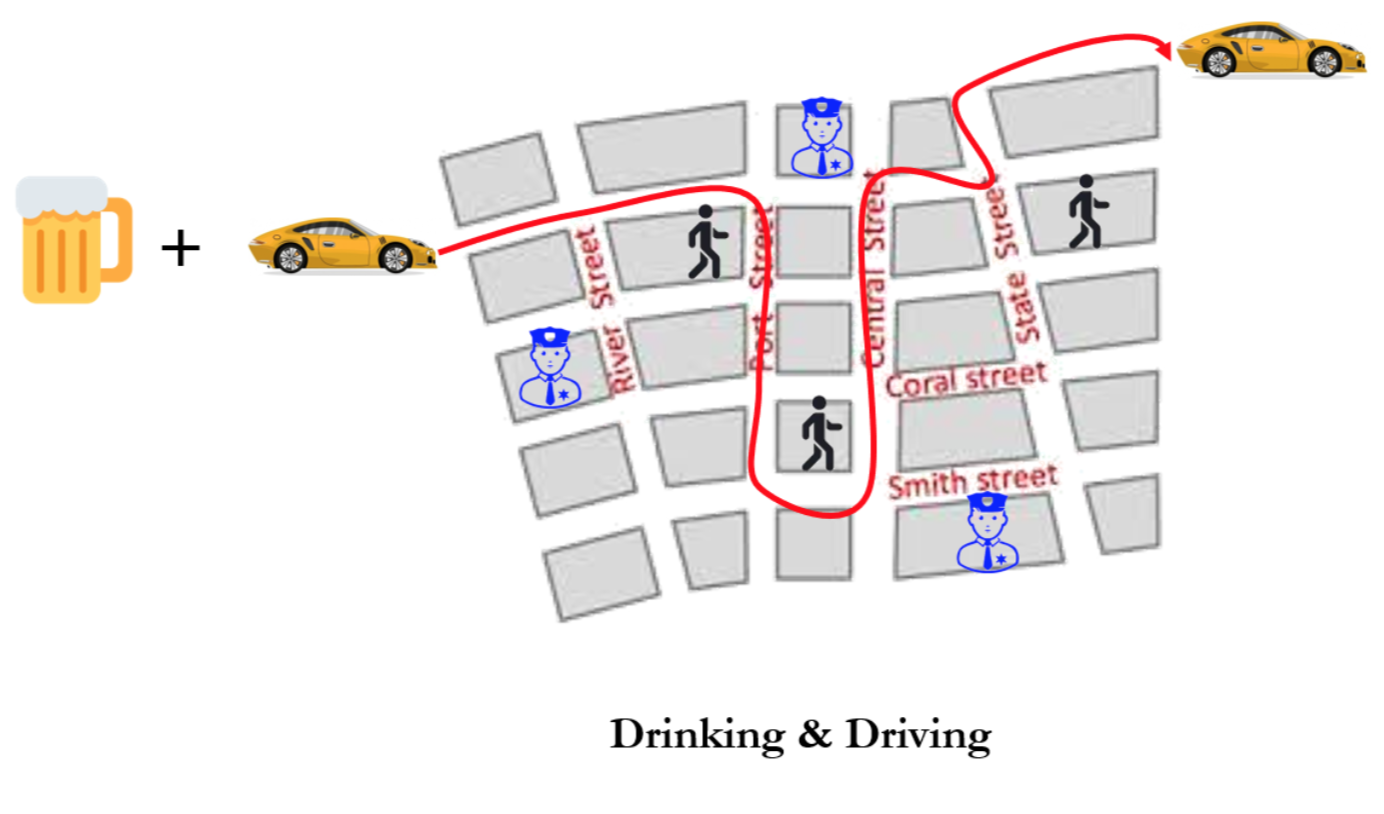

Let’s take a second scenario and look at a drunk driver who leaves a bar and attempts to make it home. In this case, let’s say that the drunk driver makes it home without incident. Because the driver made it home, was he safe? He dodged police who were patrolling the area, as well as kids playing in the streets and other variables that are not static.

Figure 2. Drinking and Driving Example

In this scenario, the ‘working environment’ was as safe as expected, but the vehicle became the unsafe element. The driver actually created the hazard to those in the neighborhood by driving the vehicle while under the influence of alcohol.

So in these two scenarios, because the soldiers and the drunk driver made it to the other side, were they both ‘safe’? Were those working environments necessarily safe? In the minefield example, the hazards were static. In the neighborhood example, the hazards were variable. Is one ‘safer’ than the other?

In the minefield, just because they’ve never tripped one of the mines (Zero Mine Trips), does that make the minefield safer?

Because the drunk driver didn’t hit anything or anyone (Zero Damages/Zero Injuries), does that make that driver any safer? Does it make that neighborhood any safer?
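One way to make the ‘safe or lucky’ question concrete is a small probability sketch. It assumes, purely for illustration, that incidents arrive independently at a constant average rate (a Poisson model). That assumption and the rates used are hypothetical and are not taken from any real site or from the Safety literature discussed here.

```python
import math


def prob_zero_incidents(expected_per_year: float, years: float = 1.0) -> float:
    """Chance of seeing zero incidents over the given period.

    Assumes incidents follow a Poisson process with a constant average
    rate. This is an illustrative assumption, not a validated model.
    """
    return math.exp(-expected_per_year * years)


# Hypothetical underlying hazard levels (expected incidents per year).
for rate in (0.5, 1.0, 2.0):
    p = prob_zero_incidents(rate)
    print(f"expected incidents/year = {rate}: "
          f"chance of a zero-incident year = {p:.0%}")
```

Under these assumptions, a workplace whose hazards would produce one incident per year on average still has roughly a 37% chance of a clean year, and one averaging two per year still has about a 14% chance. A ‘zero’ year alone cannot distinguish the safe site from the lucky one.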

USING ‘ZERO HARM’ AS A TARGET

There are many in the progressive Safety communities who have a clear disdain for those who choose to run campaigns focusing on ‘Zero’ anything, including, most importantly, ‘Zero Harm’. Their rationale is understandable and logical: 1) ‘0’ is unattainable, so it is unrealistic to expect it will ever be accomplished, and 2) once such a target is set, it is subject to manipulation so that it appears to have been attained. In my own experience, both of these ring true, but that does not mean I should not aspire to ‘0’.

Personally, I believe ‘0’ is an admirable aspiration and worthy of a commitment (as opposed to setting a tolerance, such as deciding that harming a certain number of people is acceptable). However, when we set it as a target metric, such metrics generally have some type of performance bonus attached to them. I am against any performance metrics tied to attaining a ‘0’ target because, under those conditions, one will indeed never attain ‘0’ and people will tend to manipulate the numbers to get the incentive. In the end, this results in what I call Facebook Safety: the illusion that we are safer than we are, created by people choosing to under-report in order to make the numbers.

“Zero commitment, in other words, is worth striving for. A zero target is not.”

MEASURING SUCCESSES VS FAILURES

Many try to put a positive/proactive spin on how to properly measure the effectiveness of any Safety initiative. They advocate the measurement of the presence of positives or successes (desirable behaviors and environments) versus the absence of negatives or failures (after-the-fact injuries or other bad outcomes). Certainly the successes are proactive in nature and more positive sounding, as opposed to the frequency of reactive failures. They are also touted as being leading metrics vs lagging metrics (which is another article).

To me, it is very hard to measure ‘successes’. When we do RCAs on undesirable outcomes, it’s hard enough getting team members to participate because they are so busy (being reactive). People also tend to distance themselves from an RCA because it is often associated with a negative event and may end up blaming people. And undesirable outcomes may account for perhaps 1% of the total possible outcomes.

Given the above, if I try to measure successes, how do I do that effectively? If it’s hard to find the resources to work on the 1% of outcomes that are failures, how do I find the resources to participate on teams when no failure has occurred and there is no urgency? Even if I did have the resources, how would I prioritize them? I’d love to see such an analysis if anyone could provide one (thanks in advance).

Another issue I don’t understand is this: if we were measuring successes and things were improving, wouldn’t that surface as a reduction in bad outcomes anyway? Aren’t they inversely proportional? I’m not a mathematician, but it seems that the more reliably we operate, the fewer failures we would have.

I’m open to hearing how my logic may be flawed (and I welcome the discussion) as I want to learn what I’m missing and how to build a more solid position.

About the Author

Robert (Bob) J. Latino is former CEO of Reliability Center, Inc., a company that helps teams and companies do RCAs with excellence. Bob has been facilitating RCA and FMEA analyses with his clientele around the world for over 35 years and has taught over 10,000 students in the PROACT® methodology.

Bob is co-author of numerous articles, has led seminars and workshops on FMEA, Opportunity Analysis and RCA, and is co-designer of the award-winning PROACT® Investigation Management Software solution. He has authored or co-authored six (6) books related to RCA and Reliability in both manufacturing and healthcare and is a frequent speaker on the topic at domestic and international trade conferences.

Bob has applied the PROACT® methodology to a diverse set of problems and industries, including a published paper in the field of Counter Terrorism entitled, “The Application of PROACT® RCA to Terrorism/Counter Terrorism Related Events.”

Title Picture Source [Shark]: https://ftw.usatoday.com/2019/07/great-white-shark-swims-dangerously-close-to-surfers
